In this episode we cover not only what hedge funds are, but one of the most recently used hedge fund allocation strategies: risk parity. The largest quantitative hedge funds are using this method and it is now presenting some real dangers. We use this example to touch upon how we can skeptically look at performance and also what to beware of with 13F filings. This episode synthesizes everything we’ve learned into a single practical episode.

Here is the script that was used in today’s episode.

Note: I don’t follow scripts word-for-word as they can sound unnatural, but the episodes do closely follow them.

Get excited listeners. We’re going to synthesize everything we’ve learned to create our first hedge fund strategy and go over what a hedge fund is. If you haven’t listened to the other episodes, that’s okay because this can be a good test to see if you need to brush up on anything. For the most part though, this will be a very simple explanation so relax and enjoy listening. Oh! And I even made an entirely new feature and initiative on Tiingo to aid in this episode. Actually, I had this podcast all scripted out and then I realized, “I should just make this hedge fund tool for everyone.” So… this is going to be a really fun episode.

I consider this an important episode because we’re going to be using some metrics we’ve learned about and touching upon new ideas like risk management and position sizing and what they mean. We’re also going to discuss criticisms of the hedge fund strategy we’re covering, which will give you a look into how we should all view markets and claims made by individuals. One of the most important skills you can develop as an investor and trader is skepticism.

Here is a fun story that upsets me quite often. I used to work at a big bank, and there was a Managing Director there. A managing director is the most senior title you can get at a bank before you get into CEO or CTO. In other fields it may be called a Principal, Partner, and so on. Point is, it’s a very high title. Well this MD, managing director not medical doctor, was followed across wall street because his research was popular. What the bank didn’t advertise was that this MD originally traded, but because he lost money for 7 years straight, they no longer allowed him to trade with bank money and instead allowed him to publish research because it helps their relationships with clients. Another fun point? Of the people who read his research, half of them mocked him and used him as a joke of everything wrong in market analysis. This MD would literally look at a price graph and then draw arrows. That’s it. He would circle things, and draw arrows where he thought things were going.

I rarely trash talk as you know in this podcast, but I bring up this example to highlight how important skepticism is. Even if you think somebody is a pundit or brilliant, fact checking is incredibly important. Misinformation is so dangerous because it means we can lose our money. It’s one thing if the misinformation is a genuine mistake and a person tried, it’s another if an institution knows a person had bad research yet still promotes him for sales. I will never stand for the latter and will continue to be vocal on this.

So to recap: always be skeptical. Even of me. Verify everything I say. I try my best but I am human so if you think I’m wrong, please check. If you don’t think I’m wrong, then definitely fact check me! Haha, that’s an important lesson!

OK moving on to some quick Tiingo announcements. This week we have revamped the entire fundamental database so it has the data structured in tables as well as graphs. The data is now also more accurate and had extensive coverage for over 3,500 stocks. Secondly, I have started the Tiingo Labs initiative, which contains a powerful tool you can use with this podcast. And thirdly, I just added a chat reputation system, as well as something called a Tiinglet. I realized some of the best converrsations among friends happen within chats, but we don’t have a way to save them down. I present a Tiinglet, it lets you turn your discussion about markets into something you formalize and give to the public to help others learn. If you open the Tiingo chat, click a username of a message you like, a box will come up and within a few clicks, you will make a site centered around your dialogue.

For example, if you and a friend are talking about Apple and one of you comes up with great analysis you think you could help others, then you can simply click the text and a message box comes up that lets you turn the conversation into page that is accessible to others who may have the same questions as you do.

In addition, if you like the Tiingo project – the mission, podcast, web app, and so on, please consider paying for Tiingo at http://www.tiingo.com/support once again www.tiingo.com/support. I have a pay what you can model so nobody is excluded, but in order to exist, we will need people to pay for the product.

So let’s move on into our first hedge fund strategy!

To begin let’s discuss what a hedge fund actually is and how news can often misinterprets what they do.

A hedge fund’s goal is to make money that’s uncorrelated to other assets like stocks, bonds, and so on. Think of it as if you invested in real estate. If you bought a condo,you probably wouldn’t compare it to stocks. In fact, many times people invest in property to build equity or have other investments besides stocks and bonds.

So it’s not so much hedge funds have to make more money than the stock market like the S&P 500 or NASDAQ index funds, but that they have to have a return stream that differs from those. They are a tool used by pension funds, wealthy people, banks, other institutions, and so on to diversify away their risk. For example, if you had 10 billion dollars, stocks and bonds may be nice, but you may want to have other investments too like real estate. So think of a hedge fund as a tool used by wealthy investors to diversify away some of their risk.

You may often see headlines that say, “the stock market returns 20% this year, but hedge funds only returned 12%.” But that’s not a bad thing. A hedge fund’s goal isn’t to beat stocks, it’s be uncorrelated for stocks. For example, if stocks were up 20% and a hedge fund was up 20%, and if stocks were down 10% and a hedgefund was down 10%, why would you pay fees to a hedge fund when you could own an index fund?

So to create strategies uncorrelated to the stock market or bond market, a hedge fund will trade in different styles. They are considered active managers. They also have a tool called leverage. This simply means they can borrow money. If they have $10,000, they may trade as if they had $50,000. They can also sell short, a topic we covered in Q&A. This differs significantly from mutual funds and index funds, which tend not to really use leverage in the same way, and also mutual funds and hedge funds don’t sell short. Because of this, hedge funds are often classified as an “alternative investment.” They are alternatives to traditional assets like stocks and bonds. They manage money in what is considered non-traditional ways.

Some hedge funds may be long a stock while being short another stock. This is called a long/short equity fund. Others may trade commodities or fx, and these are often called global macro funds. Some hedge funds employ quantitative strategies where they build computer programs that decide what to invest in.

One problem you see in the

The fee structure for a hedge fund is often more aggressive than a mutual fund or index fund. It’s typically assumed a fund takes 2/20 (2 and 20) or maybe you will see 1.5/15. Let’s use 2/20 as an example. The first number, 2, is the management fee. This is similar to a mutual fund. If you invested $1mm, you would pay 2% of what you invested. IN this case it would be 2% of $1mm, or, $20,000. The second number, 20, is the cut they get based on performance. For example, if they make 15% on $1mm, or $150,000, they will get a cut of that $150,000. The second number represents the % cut they get. So if it’s 20%, they would get 20% of $150,000 which is $30,000. So 2/20 (2 and 20), is a 2% management fee on what’s invested, and a 20% performance fee which is shaved off the additional money they make. If the hedge fund doesn’t make money, or losses money, they still get the management fee but do not get the performance bonus. They get the 2% but not the 20%.

So a hedge fund is a pooled investment, like a mutual fund or index fund, but they take investor’s money and then use alternative strategies to make money in different ways. Their goal is to make money regardless of market conditions while also being uncorrelated to other assets. As usual this should be the case, but often time isn’t.

Anyway, this is what a hedge fund is. It often has a mystique to it like hedge fund traders are brilliant. But just like any profession, you have people who are very good, and others who may not be so good. Often I find the media portrays hedge fund managers, especially quants, as these super brilliant mathematicians. Having gone to that side, I can assure you…unless it’s High frequency trading, the Ph.D.s and the chess champions don’t make a difference. They’re just normal people that are incredibly passionate about markets.

Now that we know what a hedge fund is, we are going to discuss a popular strategy using the knowledge we’ve gained. We need to understand volatility, correlation, and stock indexes and etfs.

So a hedge fund takes a non-traditional approach to investing. Do not try what we’re discussing at home. There are a lot of caveats to a strategy like this, some of which we’ll get into, but making sure this is done right takes a lot of practice. I don’t want to be responsible for any execution errors or mishaps. This strategy is not guaranteed to make money, and in fact could very well lose you money. Anyway, with this very scary, yet important disclaimer aside, let’s move forward, woo-hoo!

We’re going to discuss a strategy called a risk-parity strategy. Actually, risk-parity is not a strategy but an allocation method. That simply means, it’s a method to determine how much money you should put in each asset you own. What I mean by that is if you own a stock index fund and a bond index fund, how much should you put in each? In episode 3 we discussed two different ways to determine this, one was simply always keeping 60% of your cash in stocks, and 40% of your cash in bonds. We spoke about how this is naïve because it stays the same regardless of other factors. For example, if you are younger, you may be able to take greater risks, which will let you be in more stocks.

In the same way, a risk parity strategy helps you decide how much to put in each stock. We’re going to use the 60/40, 60% stock, 40% bond, portfolio as an example for this strategy.

So a big trend among large hedge funds, like AQR and bridgewater, is to determine how much to put in each asset using a risk-parity strategy. They may add a few twists to the idea, but at it’s base core, a lot of it is determined by this method.

So what is risk parity? Well it simply means equal-volatility weighting your portfolio. Before you shut off this podcast, I will actually explain what that means. I can’t stand when people define terms using equally difficult terms or phrases so I won’t do that to you.

So you know how in the 60/40 stock/bond portfolio 60% of our cash was in stocks, and 40% was in bonds? Well we generally assume stocks move around a lot more than bonds do. Bonds are assumed to be a bit more stable. This is a concept we call volatility. We say, on average, stocks are more volatile than bonds. Typically, many people measure risk as volatility. Something that moves around a lot, could be said to be more risky. So sometimes volatility and risk are sometimes said to be synontmous. SO breaking down the term, risk parity, we can say volatility-parity. And parity means for something to be equal. Using these definitions, we can say “risk parity” roughly translates to “volatility equal”, or more naturally, “equal volatility.” Risk parity means equal volatility.

But what does that mean practically? A common example is if you take a 60/40 stock/bond portfolio, and measure the volatility, we see 90% of the volatility comes from stocks, and 10% of the volatility comes from bonds. Going forward we are going to use the term “cash.” This means exactly that. If we put 60% of our cash in something, it means if we had $1,000, we would take $600 and invest it in stocks. We would then take $400 and put that in bonds. a 60/40 portfolio is 60% cash in stocks, 40% cash in bonds.

If we took 60% of our cash and put it in stocks, and 40% of our cash and put it in bonds, 90% of the movement would come from stocks. Only 10% of the movement would come from bonds. Because stocks are said to be higher risk, or higher volatility in this case, they would make up 90% of the risk in your portfolio, even if they were only 60% of the cash.

So what risk parity says is that we should make stocks only take up 50% of the risk, and bonds make up 50% of the risk. If 60% cash results in 90% risk, how much would we have to scale back? Well if we put 33% of our cash in stocks, that would make the portfolio take up 50% of the risk.

What about bonds? Well since 40% cash results in 10% risk, if we multiply our bond position by 5, we can get 50% risk. That means we have to take the 40% cash position/10% risk position, and multiply both by 5. We can see that 200% cash in bonds results in 50% risk.

But how do we put 200% of our cash in something? Well this is a concept called leverage. This is something hedge funds can do as we mentioned earlier, they can essentially borrow money to multiply their returns. Individuals can do this too through margin and futures, but we’re not going to cover this here quite yet as this is a more advanced topic and has serious risks involved.

So to recap, in order to take a 60/40 stock/bond cash portfolio, and make the portfolio 50/50 in volatility/risk, we have to cut the position of stocks and lever up the position in bonds.

Notice how we are using the volatility of an asset to determine how much to allocate? This is a dynamic method, and no different than if we did 60/40 or another allocation method. So risk parity just tells us how much to put into each asset. The strategy will tell you what assets, and risk parity will tell you how much to put in each asset.

So let’s get down to it, how much would this strategy make vs a 60/40 strategy? And here is where things are gonna get SO fun.

The risk parity strategy returned 45% total over the past 12 years. The 60/40 portfolio returned 61% total. This wasn’t assuming reinvesting dividends for those wondering – if you want to ask my why shoot me an E-mail.

So you may be thinking,”Rishi you said this was profitable…but I would make less? What is wrong with you.” Well here is the key information and why hedge funds can do this better than 60/40. We have to look at how this strategy performed relative to the path it took. In episode 5 we talked about volatility and how the path to the return we got matters. For example, if invested $100,000 and doubled our money to $200,000 that’s awesome. But what if half way through that $100,000 turned into $50,000?

Likewise, what if you invested $100,000 and made $150,000 but the lowest your portfolio ever got was $99,000. Which would you prefer? Even if you’re telling me the down $50,000 scenario, here is why it’s still worse if you’re a hedge fund.

The risk parity strategy had a volatility of about 5.5%. The volatility of the 60/40 was about 11%, almost double. So what a hedge fund will do is that they will apply even more volatility, because investors want a higher return. So to compare apples to apples, a hedge fund may use leverage and double the amount of money into risk parity, so you take that volatility of 5.5% and double it, and now you have 11% volatility. But you also have double the return.

So if we want to compare apples to apples, we should also compare the volatility, or the path it took us to get to the return we have. So if you double the leverage to a strategy, you not only double volatility but the return. So that 45% we made on risk parity becomes 90%. 90% on risk parity vs. 61% on a 60/40 portfolio. There are a bit more nuances to this strategy that actually improve performance of risk parity, but we’ll get to that soon enough in this podcast series.

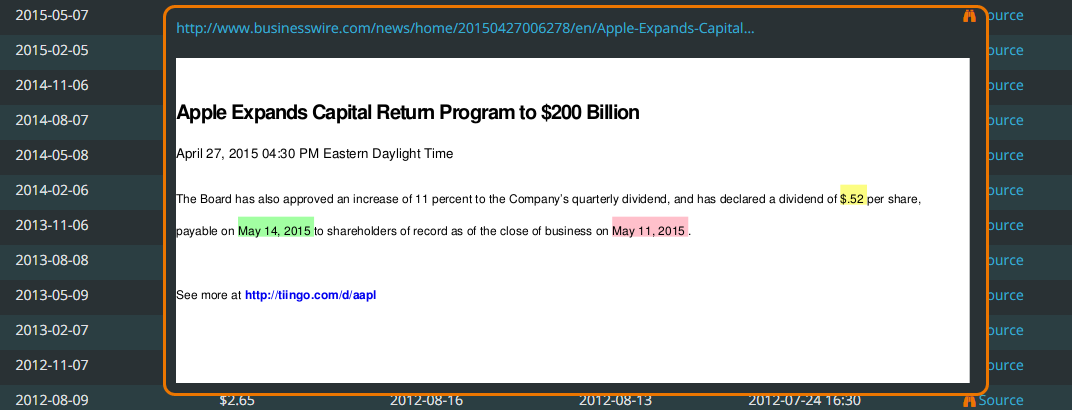

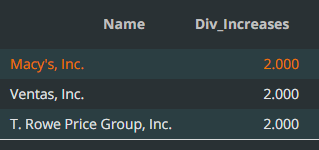

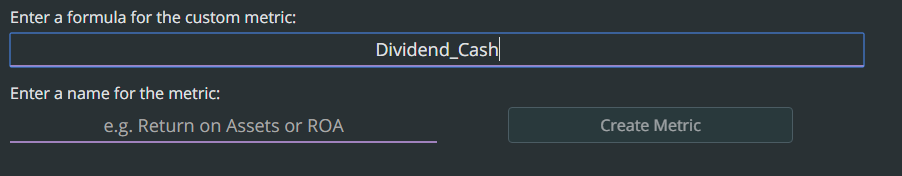

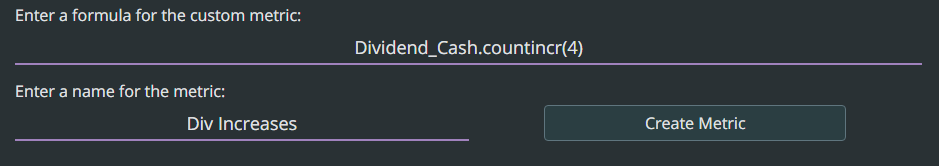

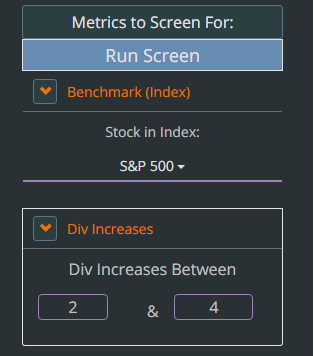

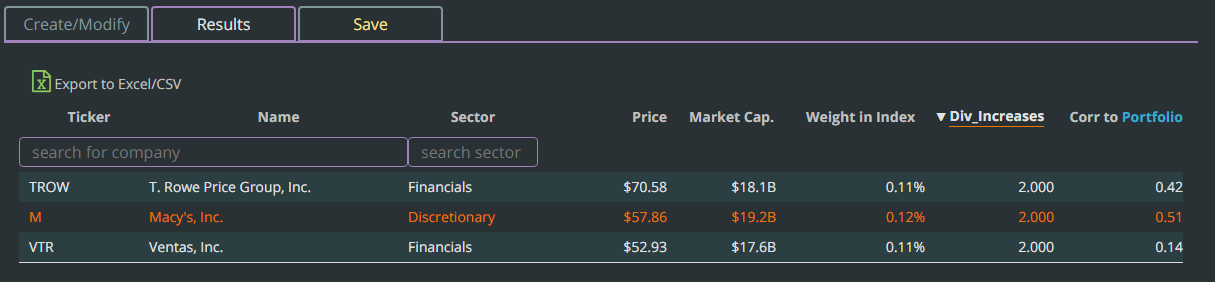

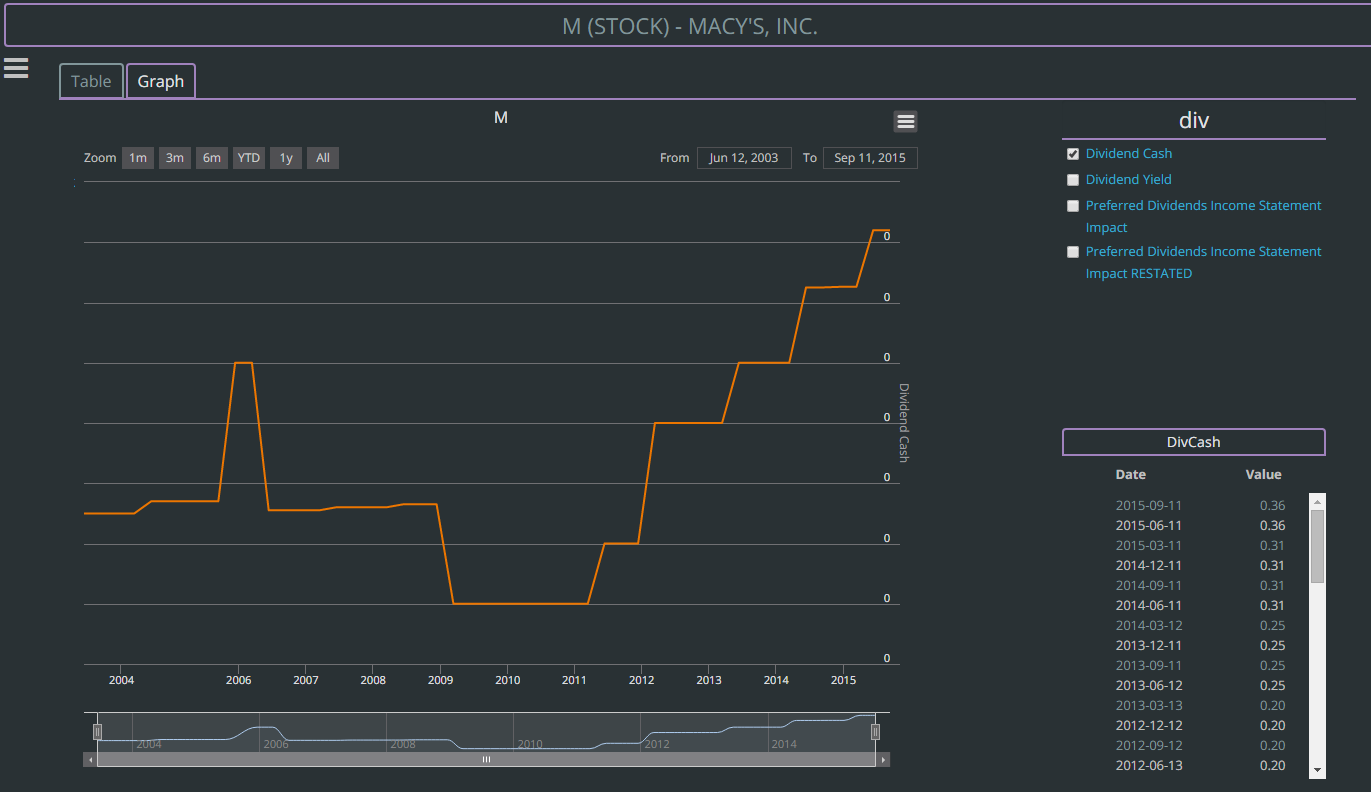

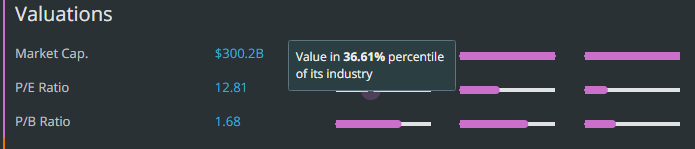

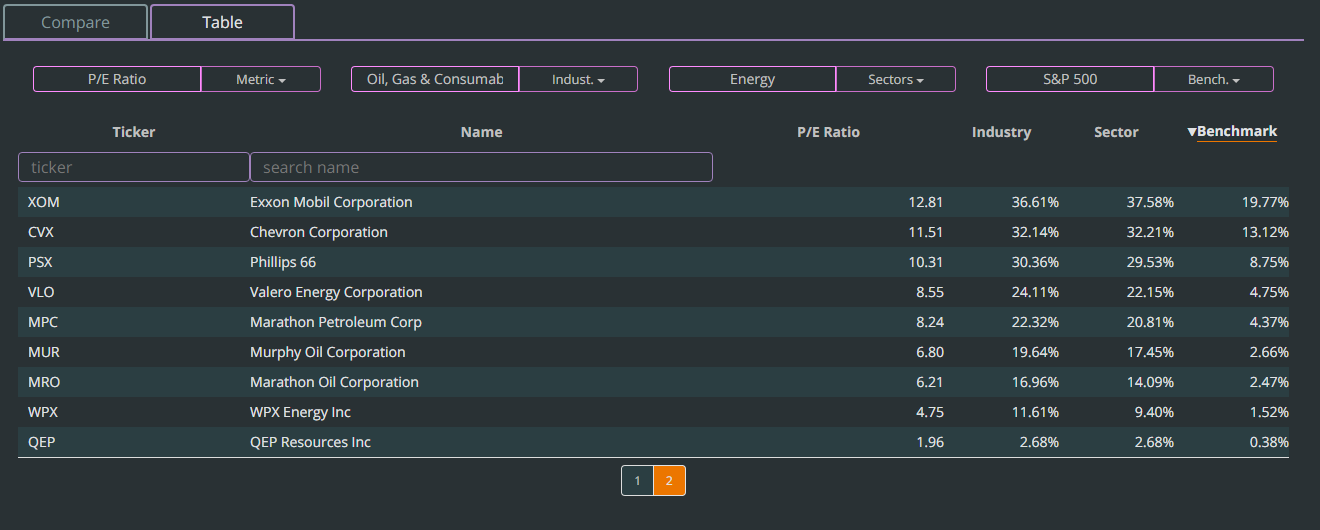

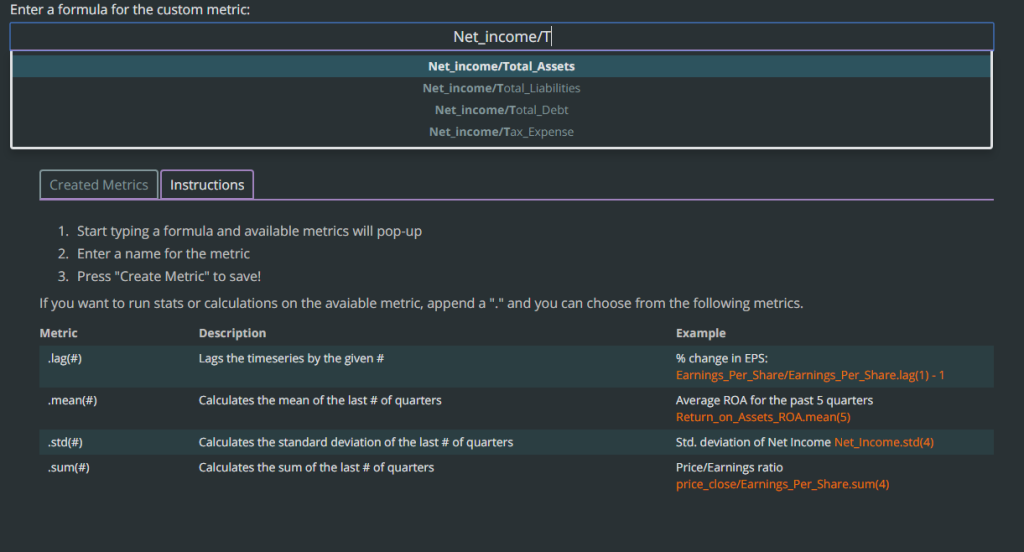

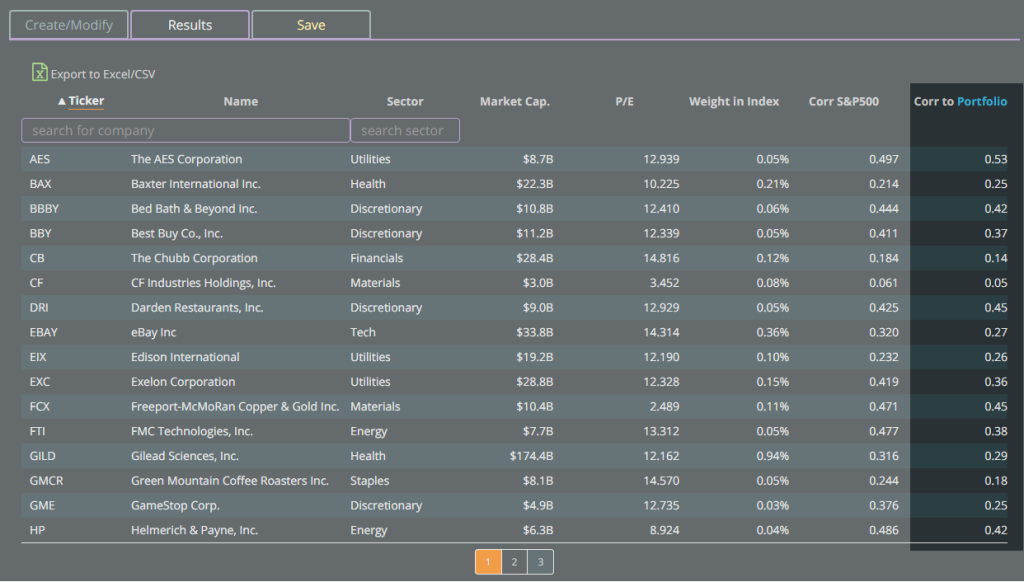

If you want to play with this risk parity allocation method, I mentioned I created a tool to help you do this. Know this is an informational tool and you should not trade on the results. I have not put in tradeable assumptions, but this is a good informational off-the-cuff proof of concept. And please treat it as such, it’s not a full replication of the strategy nor how much you should invest. So with that diclaimer, check out the tool on Tiingo.com/labs. You’ll see a link that has risk parity. This is a sweet tool that may let you get an idea. You just type in the tickers you want in your portfolio and press enter. Maybe you want to include the S&P500, bonds, but also small cap stocks? But anyway, the possibilities are endless and I hope you find joy and fun is playing around with this!

The next question we have to ask ourselves, is why does this strategy perform so well?

This is where skepticism in markets is so critical. If a strategy performs very well, it’s important to ask ourselves why? What conditions are allowing it to perform so well? Is it the economy, maybe government policy? Certain changes in technology?

In this case, the common explanation of why risk parity does so well is especially from the bond market. In the U.S., for the past 30 years, bonds have done extremely well. They’ve never really gone down for an extended period of time like stocks have. And after the 2008 crises, the Federal Reserve, which sets an interest rate that bonds are affected by have gone down. The Federal Reserve, or Fed, did this to promote credit and boost the economy. We will get into how that works later, but the take away is that fed policy has allowed rates, like loan or mortgage rates, to stay low. Not only that, awhile ago the Fed committed to doing that for awhile.

In Episode 5 we mentioned how uncertainty creates volatility. Well, when a book government agency that influences rates says, “we’re going to do this for a long time” it removes a lot of uncertainty. This in turn removes volatility from bonds.

So what we’ve seen are that bonds are performing very well, the price goes up. If you’re new to bonds, it’s said the price of bonds is inversely proportional to the interest rate. What that means is that if rates, like you see on loans, goes down, the bond is worth more. We will cover this more in depth later, but if rates are up, bond prices are down. If rates are down, bond prices are up.

So since the Fed committed to keeping rates low, you’ve seen bond prices go up. Secondly, you’ve seen a lot of uncertainty removed in the bond market, resulting in low volatility. And since risk parity equal-volatility weights, in order for the volatility to be 50-50 stocks and bonds, hedge funds have bigger positions in bonds.

So the argument against risk parity is that it applies an unfair amount of leverage to bonds. To mitigate this, hedge funds look at the volatility every month, and do what we call “rebalance.” If volatility was higher for an asset the previous month, they will put less money in the asset the next month. Every month they make take the average volatility for the past 3 months and add or reduce their position in each asset.

However, rebalancing happens once a month. What if the price of bonds fell quickly within a month. Let’s explore it for a moment.

In our example, we borrowed double our money to invest in bonds. Let’s say we had $1,000 and borrowed another $1,000. The $1,000 we had is our equity. So if bonds fell 50%, we would lose 50% of the combined value of $2,000. We would lose half, so $1,000, which would completely wipe out our equity.

If we levered 300%, so we had $1,000, but borrowed another $2,000, a 33% fall in bonds would be a loss of $1,000 and wipe out our equity. The equity is what we actually have, so if we lose all of it, we go bankrupt.

The truth is though, with hedge funds, if they are down 20%, investors get scared and often pull their money away. If your mutual fund was down 20%, you would probably rethink the investment.

Right now, a big worry among investors in hedge funds is that the economy has been doing pretty well. So when is the Fed going to allow interest rates to rise? And what if it happens really quickly? If rates rise quickly, the price of bonds will fall quickly. And remember, the top hedge funds are using this strategy and they manage $200bn among the top few alone. If these $200bn is highly levered, imagine how many billions could be wiped out if bonds just fall 10%.

This is similar to the 2008 crises. Many people were hurt because banks were offering low down-payment mortgages, and that just means people levered. a 10% down payment means you are levered 10:1, or 1000% on your equity. If your house dropped 10%, you were wiped out. This is what hurt people.

In the same way, a big worry is that because funds are so levered on bonds, if they fall in price, you could see billions of dollars wiped out. Funds have tried to come out and recognize this problem and are taking steps to address it. I wont comment specifically if I think these steps are appropriate, at least not publicly, so if you want to have that discussion, shoot me an E-mail!

The caveat though is that leverage needs to be used appropriately, and many people think the reason this strategy has done so well is because bonds have done incredibly well over the past 20 or 30 years. Stocks have also done very well in the past 6 years, so this keeps adding to the returns of the strategy.

These are the drawbacks and everytime you see performance numbers, always ask yourself why? Asking why an opportunity exists is not just a powerful tool in business, but also markets. Maybe there is a reason this strategy works that may make you feel uncomfortable.

Either way, I know this is a lot to take in, so if you have to repeat a few parts, I apologize. But this example is truly an expression of how we can combine the things we learned so far into a strategy the largest hedge funds are using.

This has been fun and if you have any feedback please E-mail me at [email protected]